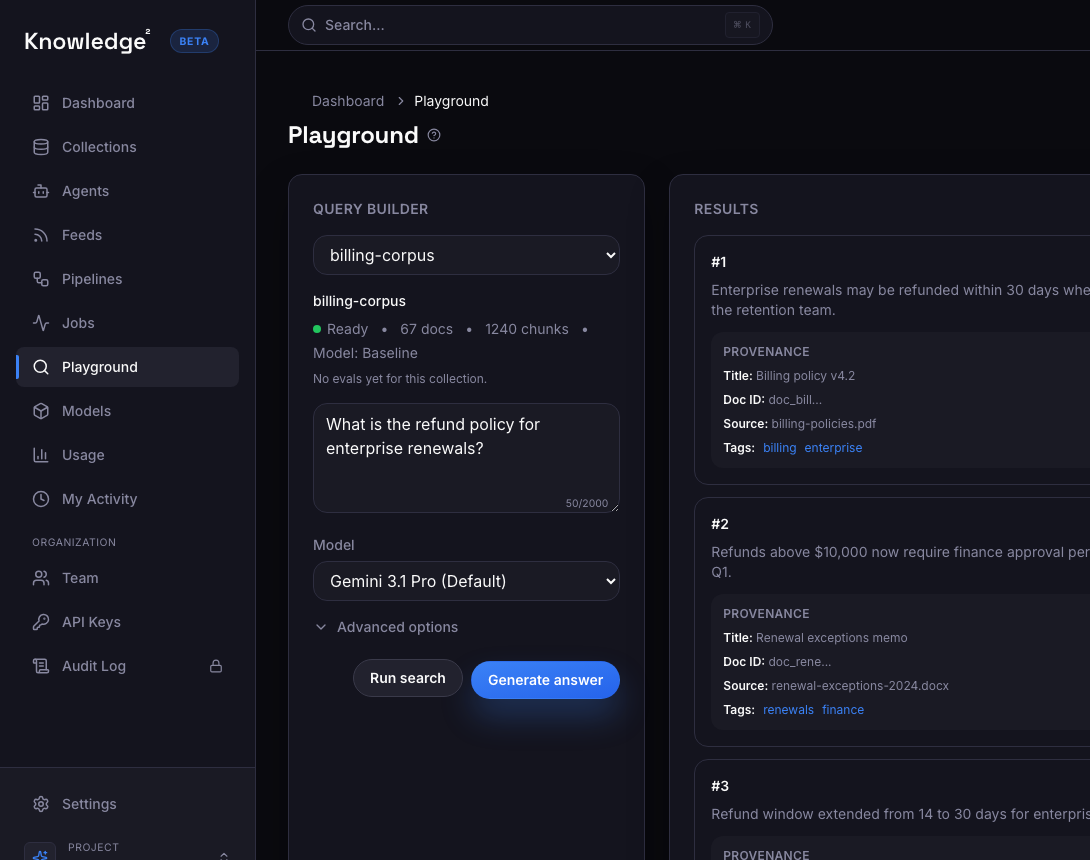

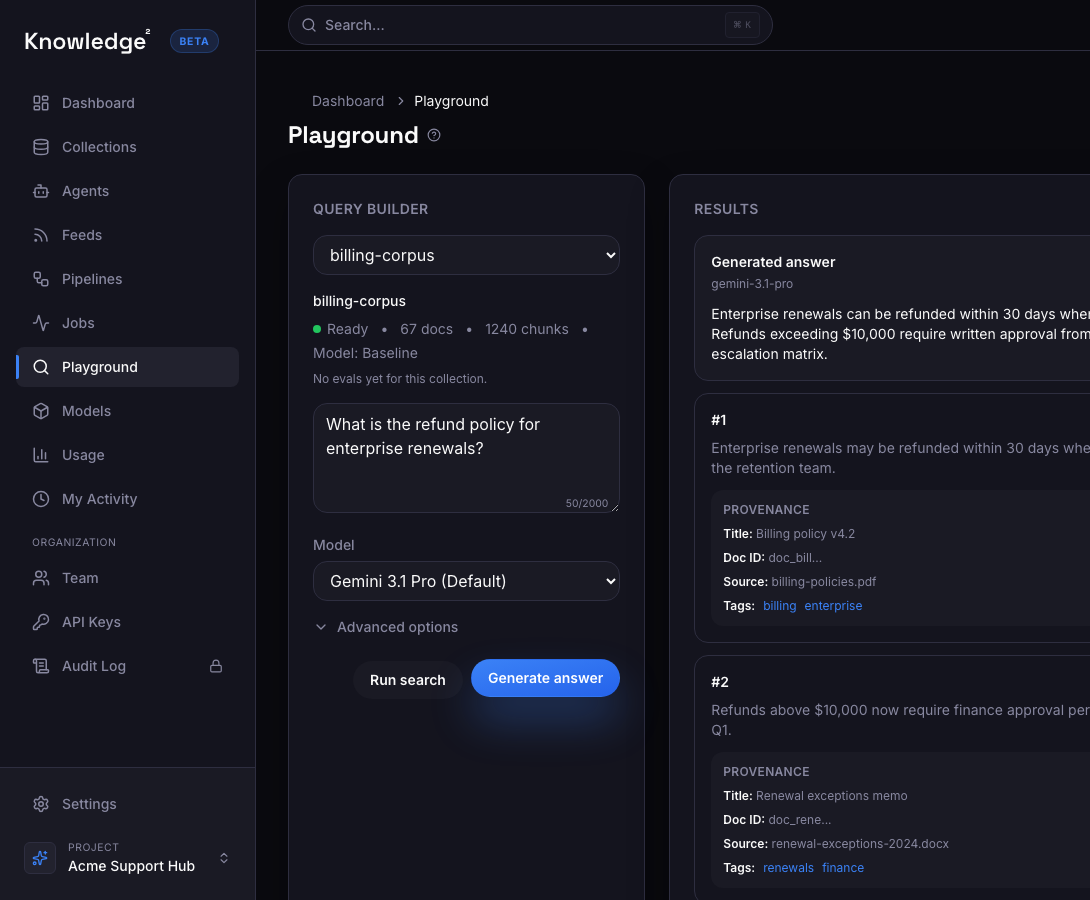

Example user experience

A support rep checks refund eligibility

The answer is direct, grounded in cited sources, and immediately useful.

Question

What's changed in our refund policy for enterprise renewals?

Grounded answer

Enterprise renewals can still be refunded within 30 days when the account is under active review. Refunds above $10,000 now require finance approval.

- Source: Billing policy v4.2

- Source: Renewal exceptions memo

- Updated: 2 days ago